Value investing had a singularly bad run from 2007 to 2020. (And it hasn’t done great since 2020, either.) Is that because value investing is broken, or did it simply hit a streak of horrendous luck?

Skeptics of value investing have made many claims about why value investing doesn’t work anymore, but these claims tend to be light on evidence. Value investing proponents have empirically researched most of these claims and found that they don’t stand up to scrutiny.

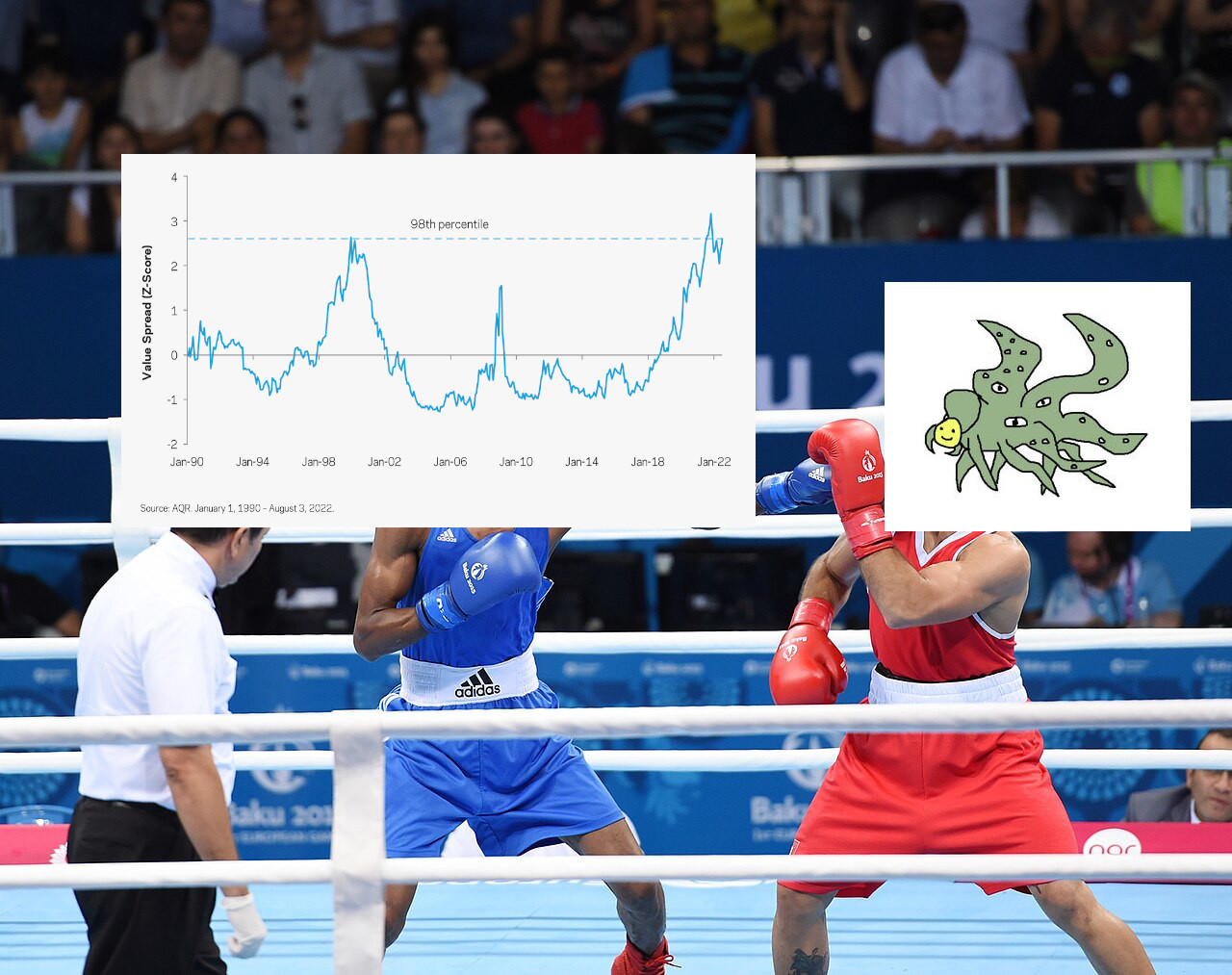

The poor performance of the value factor was not primarily driven by weakening fundamentals, but by the widening of the value spread. A wider value spread makes value investing look more attractive going forward, not less.

What's the value spread?

Value stocks are defined using the ratio of a stock’s price to some fundamental metric—for example, earnings, book value, or cash flow. If we use earnings as the metric, then value stocks are those with low P/E ratios and growth stocks are the ones with high P/Es.

The value spread is the ratio of price-to-fundamental ratios between growth stocks and value stocks. For example, if growth stocks have an average P/E of 30 and value stocks have an average of 15, then the value spread is 30/15 = 2.
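As a minimal sketch, the definition above can be written as a one-line calculation. The function name `value_spread` and its parameter names are illustrative only; the inputs are the average P/E figures from the example.

```python
def value_spread(growth_ratio: float, value_ratio: float) -> float:
    """Growth stocks' average price-to-fundamental ratio divided by value stocks'.

    Both arguments must use the same fundamental (e.g. both are P/E ratios).
    """
    if growth_ratio <= 0 or value_ratio <= 0:
        raise ValueError("price-to-fundamental ratios must be positive")
    return growth_ratio / value_ratio


# The example from the text: growth at a P/E of 30, value at a P/E of 15.
spread = value_spread(growth_ratio=30.0, value_ratio=15.0)
print(spread)  # 2.0
```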

All else equal, a wider value spread is good for value because you’re buying the same fundamentals at a lower price. However, a widening spread is bad for value because it means value stocks are falling in price relative to growth stocks while the spread widens. This is analogous to how bond investors like it when bond yields are high, but lose money while yields are rising.
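To make the level-versus-change distinction concrete, here is a rough sketch under a simplifying assumption: earnings stay fixed for both groups, so any change in the spread comes purely from prices. The scenario (value's P/E falling from 15 to 10 while growth stays at 30) is hypothetical, as are the function names.

```python
def relative_price_change(old_spread: float, new_spread: float) -> float:
    """Change in value's price relative to growth's, holding fundamentals fixed.

    With fixed earnings, price_value / price_growth is proportional to
    1 / spread, so the relative price moves by old_spread / new_spread.
    """
    return old_spread / new_spread - 1.0


def earnings_yield(pe_ratio: float) -> float:
    """Earnings bought per dollar invested, i.e. the inverse of P/E."""
    return 1.0 / pe_ratio


# Hypothetical scenario: growth stays at a P/E of 30, value falls from 15 to 10.
old_spread = 30.0 / 15.0  # 2.0
new_spread = 30.0 / 10.0  # 3.0

# The widening itself hurts: value loses ground relative to growth.
loss = relative_price_change(old_spread, new_spread)
print(f"relative return while widening: {loss:.1%}")

# The wider level helps going forward: each dollar now buys more earnings.
print(f"value earnings yield before: {earnings_yield(15.0):.1%}")
print(f"value earnings yield after:  {earnings_yield(10.0):.1%}")
```

The same move that produced the loss also raised value's earnings yield, which is the bond-yield analogy in numbers.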

I wouldn’t dismiss value investing on the basis of poor recent performance.

However, there’s a potentially strong argument against value investing that remains unrefuted.

Historically, the structural return of the value factor—the component of return that comes from company fundamentals, rather than changes in the value spread—was about 4–6%. But over the past two decades, that number has averaged a mere 1%. Unlike with the value spread, a muted structural return does not imply higher future expectations for value investing.
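One simplified way to picture this split is to treat the structural return as the residual left after removing the effect of the spread change. The sketch below assumes a multiplicative decomposition, (1 + total) = (1 + structural) × (old spread / new spread). That is an assumption made for illustration, not necessarily the method behind the figures quoted above, and the input numbers are hypothetical.

```python
def structural_return(total_relative_return: float,
                      old_spread: float,
                      new_spread: float) -> float:
    """Back out the part of value's relative return not due to spread changes.

    Assumes (1 + total) = (1 + structural) * (old_spread / new_spread),
    a simplification chosen for this sketch.
    """
    spread_effect = old_spread / new_spread  # below 1 when the spread widens
    return (1.0 + total_relative_return) / spread_effect - 1.0


# Hypothetical year: value trails growth by 3% while the spread widens 2.0 -> 2.2.
s = structural_return(total_relative_return=-0.03, old_spread=2.0, new_spread=2.2)
print(f"structural return: {s:.1%}")
```

In this made-up case the fundamentals contributed a positive return even though value underperformed, because the widening spread more than offset it. A low structural return is the opposite situation: the shortfall cannot be blamed on the spread.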
