What Are the Best TV Shows (According to IMDb Episode Ratings)?

Recently, I was browsing IMDb’s list of top-rated TV shows:

According to IMDb ratings, Planet Earth II is the second-best TV show of all time, with 9.5 stars out of 10. But if you look at the ratings of each individual episode, they range from 6.8 to 7.9 [1]:

In general, the rating of a TV show usually differs from the average rating of that show’s episodes. What does the list of top TV shows look like if we sort by average episode rating instead of show rating? Perhaps voters have different motivations when they’re rating shows than when they’re rating individual episodes, and it could be interesting to see how the ratings differ.

So I downloaded the IMDb public database to find out [2].
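As a rough sketch of that computation (not necessarily how the lists in this post were produced), the snippet below averages episode ratings per show. It assumes the column names I recall from IMDb's public TSV files (`tconst`, `parentTconst`, `averageRating`, `numVotes`), and the sample rows and IDs are made up. The real files are gzip-compressed and much larger.

```python
import csv
import io
from collections import defaultdict


def read_tsv(text):
    """Parse tab-separated text with a header row into a list of dicts."""
    return list(csv.DictReader(io.StringIO(text), delimiter="\t"))


def to_tsv(rows):
    """Build tab-separated text from a list of rows (helper for the sample data)."""
    return "\n".join("\t".join(row) for row in rows)


def average_episode_ratings(episode_rows, rating_rows):
    """Return {show id: mean averageRating over that show's rated episodes}."""
    rating_by_id = {row["tconst"]: float(row["averageRating"]) for row in rating_rows}
    ratings_by_show = defaultdict(list)
    for row in episode_rows:
        rating = rating_by_id.get(row["tconst"])
        if rating is not None:  # skip episodes that have no rating row
            ratings_by_show[row["parentTconst"]].append(rating)
    return {show: sum(rs) / len(rs) for show, rs in ratings_by_show.items()}


# Made-up sample data standing in for the episode and rating files.
episodes = read_tsv(to_tsv([
    ["tconst", "parentTconst"],
    ["ep1", "showA"],
    ["ep2", "showA"],
    ["ep3", "showB"],
    ["ep4", "showB"],  # unrated episode, ignored in the average
]))
ratings = read_tsv(to_tsv([
    ["tconst", "averageRating", "numVotes"],
    ["ep1", "8.0", "120"],
    ["ep2", "9.0", "80"],
    ["ep3", "7.5", "5"],
]))

averages = average_episode_ratings(episodes, ratings)
for show, avg in sorted(averages.items(), key=lambda kv: kv[1], reverse=True):
    print(show, round(avg, 2))
```

Sorting the resulting dictionary by value gives a ranking by unweighted average episode rating.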

This list shows the top 250 TV shows, ordered by average episode rating:

We immediately run into a problem with this list: it’s dominated by little-known shows that only have about five votes per episode. If an episode has five votes, even if they’re all 10-star ratings, I wouldn’t feel confident that it really deserves 10 stars–perhaps if more people watched the show, most of them wouldn’t like it.

There’s a known solution to this problem, which IMDb used to implement. Their current ranking algorithms are more sophisticated and secret, but the old system is good enough for us.

Intuitively, the more votes a show has, the more trustworthy its rating. We can formalize the intuition with this formula:

$$\text{weighted rating} = \frac{v}{v + m} \cdot r + \frac{m}{v + m} \cdot c$$

where

`r` is the unweighted rating of the episode;

`c` is the average rating across all episodes;

`v` is the number of votes;

`m` is the vote minimum.

In effect, it’s as if we give every TV episode m extra votes at the average rating. If we use m = 1000, then an episode with only five votes will have a weighted score that’s close to the average across all episodes; whereas for an episode with 10,000 votes, the 1000 extra votes won’t matter much.
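The "m extra votes at the average rating" description translates directly into a few lines of Python. This is a minimal sketch: the function name is my own, and the 9.5-star, 10,000-vote episode is a hypothetical input chosen only to illustrate the two cases described above.

```python
def weighted_rating(r, v, m, c):
    """Blend an episode's rating r (from v votes) with m pseudo-votes at the mean c."""
    return (v * r + m * c) / (v + m)


# An episode with five 10-star votes is pulled almost all the way to the mean.
few_votes = weighted_rating(r=10.0, v=5, m=1000, c=7.4)

# A hypothetical episode with 10,000 votes keeps most of its own rating.
many_votes = weighted_rating(r=9.5, v=10_000, m=1000, c=7.4)

print(round(few_votes, 2))   # 7.41
print(round(many_votes, 2))  # 9.31
```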

This table displays the top 250 TV shows using this weighting algorithm, where m = 1000 and the average episode rating is 7.4. For comparison, I calculated the list of top TV shows using the same weighting algorithm [3], but with a 25,000-vote minimum (shows have a higher vote minimum because they tend to get a lot more votes than episodes). The table displays the episode-based ranking on the left and the show-based ranking on the right.

How does this compare to the original IMDb top 250 TV? Some general observations:

- Top shows on IMDb’s show-rating list generally appear near the top of my episode-rating list.

- Mini-series usually receive substantially lower episode ratings than show ratings. On the show-rating list, seven of the top 10 are mini-series (Chernobyl, Band of Brothers, Planet Earth II, Planet Earth, Cosmos, Blue Planet II, Our Planet), but only two make it onto the episode-rating list (Chernobyl and Les Synaudes).

- The episode-rating list lets some obscure shows sneak to the top. Vader: A Star Wars Theory Fan Series gets the #12 spot. My guess is that’s because it only has one episode, and because the demographic that watches the show is disproportionately likely to vote on IMDb. Perhaps we could correct for this by using an episode-weighting algorithm similar to the way we do vote-weighting, where the algorithm is more willing to give a show a high rating if it has a lot of episodes.
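The episode-weighting idea in the last bullet could look something like the sketch below, which reuses the same shrinkage formula but counts episodes instead of votes. This is only an illustration of the suggestion, not something the rankings in this post use. The episode minimum of 10 and both example shows are made-up values.

```python
def shrink_toward_mean(value, count, minimum, mean):
    """Blend `value` (backed by `count` observations) with `minimum` pseudo-observations at `mean`."""
    return (count * value + minimum * mean) / (count + minimum)


GLOBAL_MEAN = 7.4      # average episode rating quoted in the post
EPISODE_MINIMUM = 10   # hypothetical "episode minimum", analogous to the vote minimum

# A hypothetical one-episode show with a very high episode rating.
one_episode_show = shrink_toward_mean(9.6, count=1, minimum=EPISODE_MINIMUM, mean=GLOBAL_MEAN)

# A hypothetical 60-episode show with a somewhat lower average episode rating.
long_running_show = shrink_toward_mean(9.2, count=60, minimum=EPISODE_MINIMUM, mean=GLOBAL_MEAN)

print(round(one_episode_show, 2))
print(round(long_running_show, 2))
```

With these inputs, the single-episode show falls back toward the global average, while the long-running show keeps most of its score.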

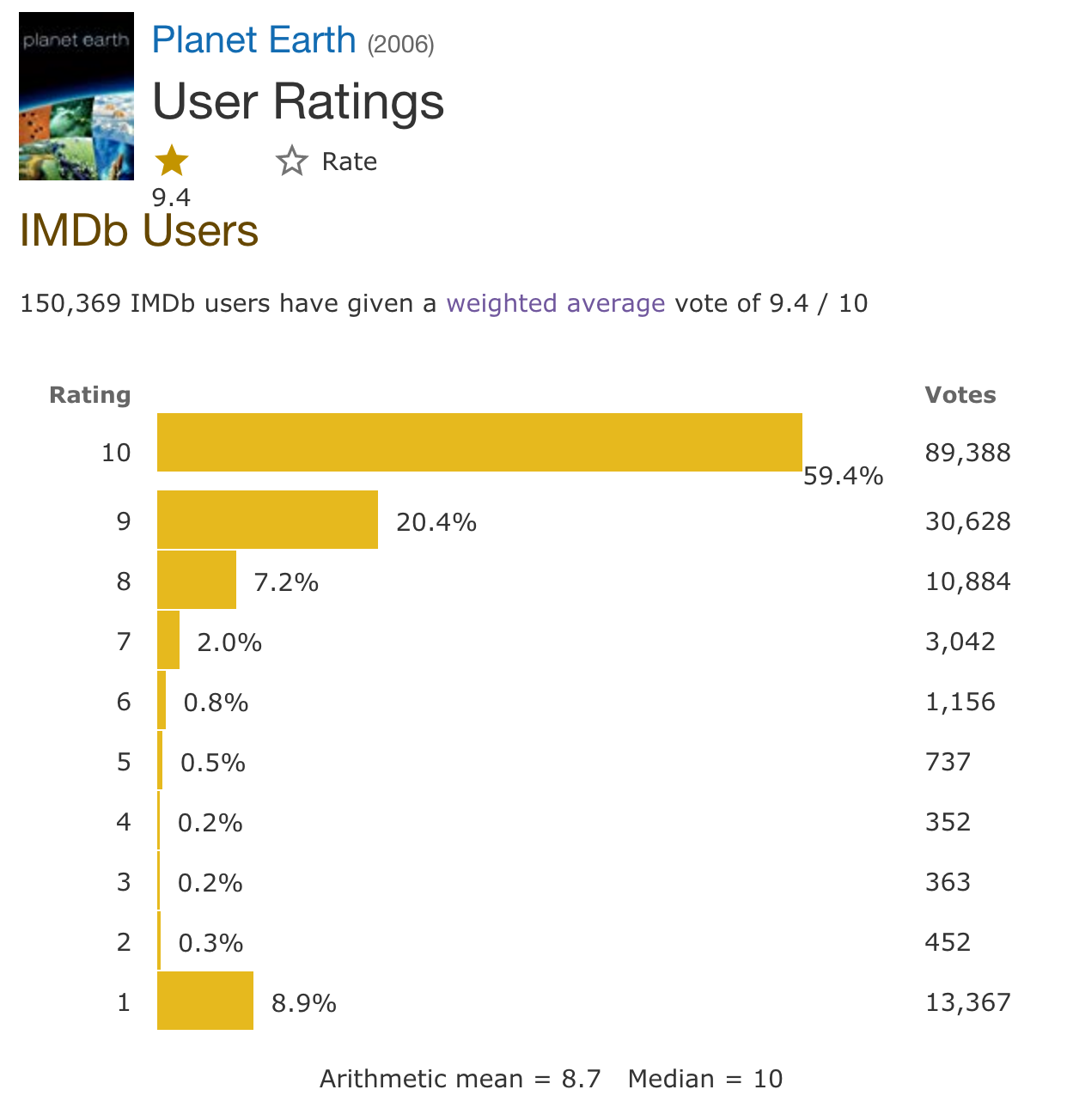

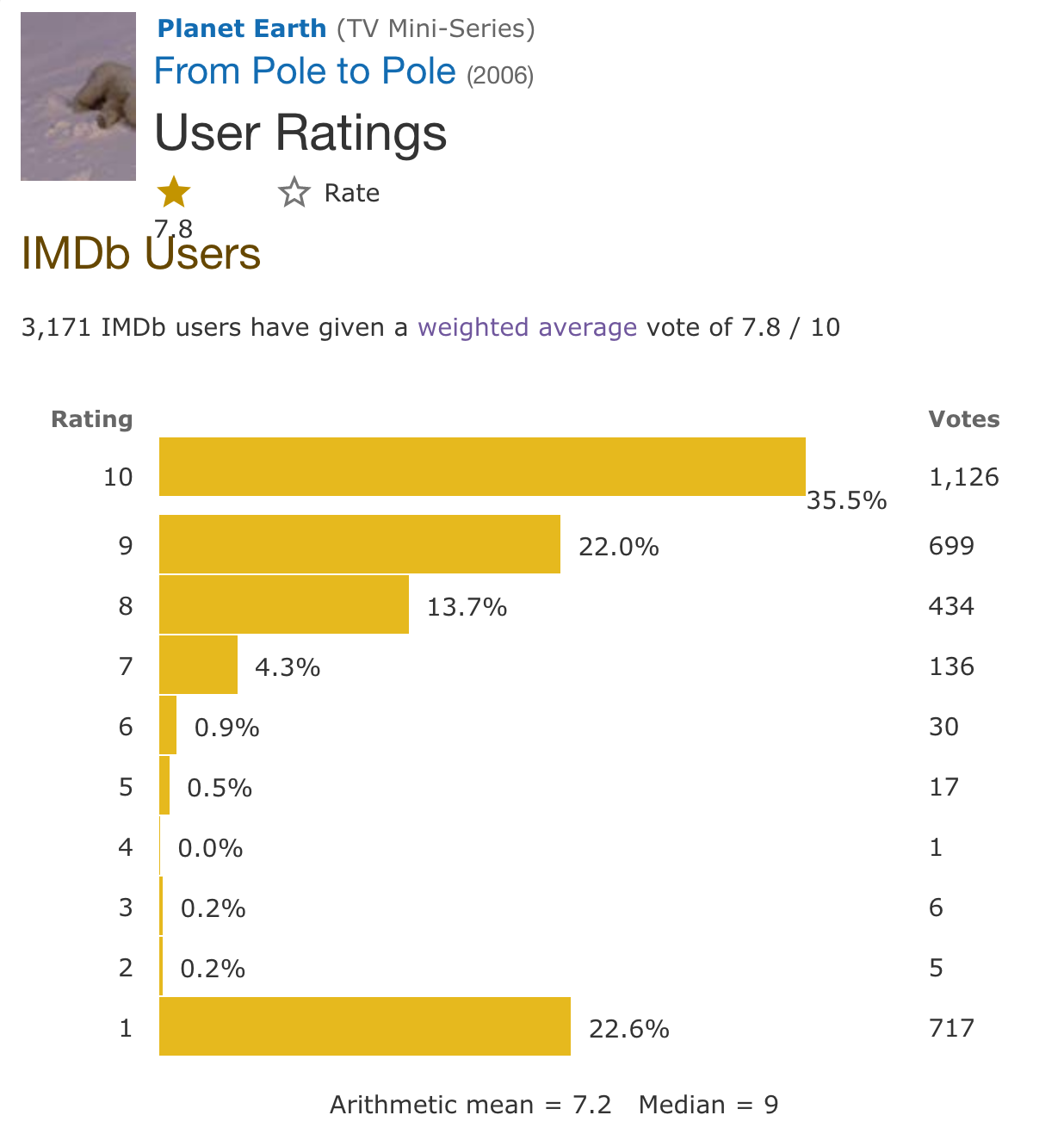

If we look at vote distributions, it appears that episodes are more vulnerable to vote stuffing than shows. Compare the vote distribution for Planet Earth versus the highest-rated single episode (“Pole to Pole”):

The show itself has 8.9% one-star reviews, but this episode has 22.6%. I find it difficult to believe that 22.6% of viewers hated the episode that much. More likely, some groups of people are trying to manipulate IMDb ratings by giving mass one-star reviews. And perhaps they try harder on episode ratings than on show ratings, or shows are harder to manipulate because they get more organic votes, or IMDb’s anti-vote-stuffing algorithms are more effective for shows than for episodes.
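For anyone who wants to repeat this comparison on other titles, the one-star share is just the one-star count divided by the total. The sketch below assumes you have copied the per-star vote counts from a title's ratings page into a dictionary. The counts shown are made-up round numbers, not the real Planet Earth figures.

```python
def one_star_share(votes_by_stars):
    """Return the fraction of all votes that are one-star votes."""
    total = sum(votes_by_stars.values())
    return votes_by_stars.get(1, 0) / total if total else 0.0


# Made-up histograms: star rating -> number of votes.
show_votes = {10: 600, 9: 200, 8: 100, 1: 100}
episode_votes = {10: 50, 9: 20, 8: 10, 1: 20}

show_share = one_star_share(show_votes)
episode_share = one_star_share(episode_votes)

print(f"show: {show_share:.1%}, episode: {episode_share:.1%}")
```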

What if we used a lower vote minimum?

I originally used 1000 as the vote minimum because it’s a reasonable round number, and it looks fairly similar to how IMDb’s weighting works. But there’s an argument that 1000 is too high. Plenty of fairly popular shows have episodes with fewer than 1000 total votes–people on IMDb tend to rate movies and shows much more often than they rate single episodes.

There’s a tradeoff in deciding how strong our vote weighting should be. If we make the prior too strong by setting a high vote minimum, small but good shows get crowded out by popular shows that might not be as high-quality. But if we set too low a vote minimum, the top list becomes dominated by obscure shows that probably aren’t actually good.
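A toy example makes the tradeoff concrete. The three episodes below are invented, and the global mean of 7.4 is the figure quoted earlier. The sketch ranks them under several vote minimums to show how the ordering shifts.

```python
def weighted_rating(r, v, m, c):
    """Blend rating r (from v votes) with m pseudo-votes at the mean c."""
    return (v * r + m * c) / (v + m)


def rank(episodes, m, c=7.4):
    """Return episode names sorted from highest to lowest weighted rating."""
    ordered = sorted(
        episodes,
        key=lambda e: weighted_rating(e["rating"], e["votes"], m, c),
        reverse=True,
    )
    return [e["name"] for e in ordered]


# Invented episodes: an obscure one, a small but well-liked one, a popular one.
episodes = [
    {"name": "obscure", "rating": 10.0, "votes": 5},
    {"name": "small but loved", "rating": 9.4, "votes": 300},
    {"name": "popular", "rating": 8.8, "votes": 20_000},
]

for m in (1, 100, 1000):
    print(m, rank(episodes, m))
# 1 ['obscure', 'small but loved', 'popular']
# 100 ['small but loved', 'popular', 'obscure']
# 1000 ['popular', 'small but loved', 'obscure']
```

A very low minimum lets the five-vote episode win, a high minimum favors the popular episode, and the middle setting rewards the small but well-liked one.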

We can look at how the top list changes when we reduce the vote minimum from one thousand to one hundred. For comparison, the left two columns show the shows and ratings with a 100-vote minimum, and the right two columns use the 1000-vote minimum that our previous list used.

Conclusion

Any rating system inevitably has bias. When people rate TV shows on IMDb, they might be rating how much they like the idea of the show more than how much they actually like the show itself. They’re probably less likely to do this for individual episodes because it’s easier to rate an episode based on the actual experience you had watching it. On the other hand, the episode-based ranking appears vulnerable to vote stuffing. It also looks like people are much more likely to rate episodes that they love: for example, the top-rated episode of Attack on Titan has over 20,000 votes, but a typical episode only has about 2,000. And both of these systems share a big source of bias: the ratings come from IMDb users, and IMDb users do not form a representative sample of the TV-watching population.

Given that every system has its own bias, I believe it’s valuable to look at different rankings, consider their differences, and think about how their biases may or may not reflect your own preferences so that you can find the rating system that’s the most helpful for you.

Notes

1. In this particular case, the low ratings probably come from vote stuffing–the episodes have way more 1-star ratings than you’d naturally expect them to get.

2. Database downloaded and screenshots taken on 2019-07-12.

3. This list differs somewhat from IMDb’s official top 250 list because IMDb uses more complex algorithms to try to counteract vote stuffing.