How valuable are weak AI safety regulations?

Image credit: Jebulon

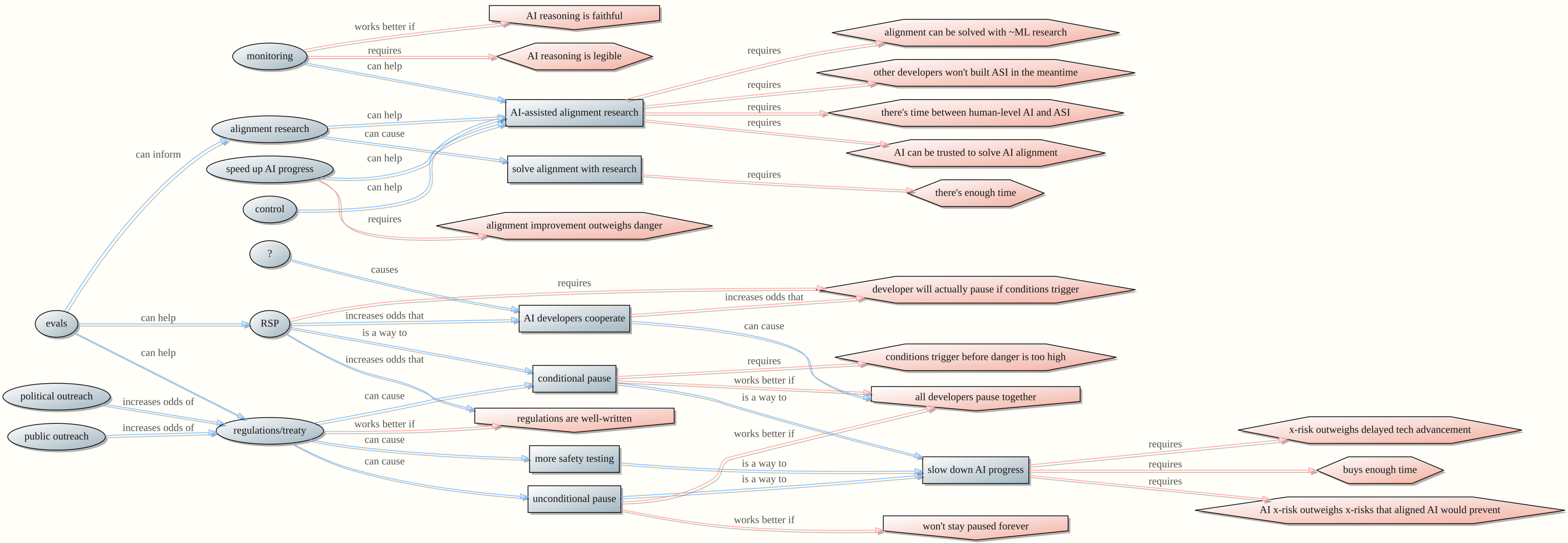

To prevent superintelligent AI from killing everyone, I would like there to be a strong international agreement banning the development of artificial superintelligence (ASI) until it can be proven safe. But that sort of agreement requires a lot of political buy-in and coordination. In the meantime, it may be easier to get light-touch AI safety regulations passed. To what extent do weak regulations decrease extinction risk?

In this post:

- Part I discusses routes by which weak regulations can reduce extinction risk.

- Part II considers some downsides of weak regulations.

- Part III reviews specific categories of weak regulation and how they might reduce risk.